When Redshift executes a join, it has a few strategies for connecting rows from different tables together. By default, it performs a “hash join” by creating hashes of the join key in each table, and then it distributes them to each other node in the cluster. That means each node will have to store hashes for every row of the table.
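To see why a hash join can be memory-hungry, here is a minimal single-process sketch in Python. It is not Redshift's implementation, and it ignores the distribution of hashes across nodes; the function name `hash_join` and the sample tables are made up for illustration. The point is that the build phase keeps an entry for every row of one table.

```python
from collections import defaultdict


def hash_join(build_rows, probe_rows, key):
    """Toy inner hash join of two lists of dicts on a shared key (illustrative only)."""
    # Build phase: hash every row of the first table by its join key.
    # This table holds one entry per row, which is where the space goes.
    buckets = defaultdict(list)
    for row in build_rows:
        buckets[row[key]].append(row)

    # Probe phase: look up each row of the second table in the hash table.
    joined = []
    for row in probe_rows:
        for match in buckets.get(row[key], []):
            joined.append({**match, **row})
    return joined


# Hypothetical sample data.
users = [{"user_id": 1, "name": "a"}, {"user_id": 2, "name": "b"}]
orders = [
    {"user_id": 1, "total": 5},
    {"user_id": 1, "total": 7},
    {"user_id": 3, "total": 9},
]
result = hash_join(users, orders, "user_id")
```

In this sketch `buckets` grows with the size of the build table, which mirrors the cost described above when every node has to hold hashes for every row.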

We’ll share what we’ve learned to help you quickly debug your own Redshift cluster and get the most out of it.

Make sure you know how much disk space you actually have

If you’re getting a disk full error when running a query, one thing for certain has happened: while running the query, one or more nodes in your cluster ran out of disk space. This could be because the query is using a ton of memory and spilling to disk, or because the query is fine and you just have too much data for the cluster’s hard disks. You can figure out which is the case by querying the stv_partitions table to see how much space your tables are using, for example with an expression such as `(sum(capacity) - sum(used))/1024 as free_gbytes`. Ideally, you won’t be using more than 70% of your capacity. Redshift should continue working well even when over 80% of capacity, but it could still be causing your problem. If it looks like you have plenty of space, continue to the next section, but if you’re using more than 90%, you definitely need to jump down to the “Encoding” section.

If the query that’s failing has a join clause, there’s a good chance that’s what’s causing your errors.
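As a rough illustration of the capacity check, the sketch below turns the two totals from stv_partitions into free gigabytes and a percentage, then applies the 70% and 90% thresholds mentioned in the text. It assumes you have already fetched `sum(capacity)` and `sum(used)` yourself, and that both are counted in 1 MB blocks; the names `DiskUsage` and `next_step` and the returned labels are made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiskUsage:
    capacity_blocks: int  # sum(capacity) from stv_partitions, assumed 1 MB blocks
    used_blocks: int      # sum(used) from stv_partitions, assumed 1 MB blocks

    @property
    def free_gbytes(self) -> float:
        # Same arithmetic as the free_gbytes expression: (capacity - used) / 1024.
        return (self.capacity_blocks - self.used_blocks) / 1024

    @property
    def percent_used(self) -> float:
        return 100 * self.used_blocks / self.capacity_blocks


def next_step(usage: DiskUsage) -> str:
    """Map usage to a rough next step using the article's thresholds."""
    pct = usage.percent_used
    if pct > 90:
        return "encoding"     # over 90%: go to the "Encoding" section
    if pct > 70:
        return "investigate"  # above the 70% ideal: space may be the problem
    return "plenty"           # enough headroom: look at the query instead


# Hypothetical totals for a small cluster.
usage = DiskUsage(capacity_blocks=2048, used_blocks=1024)
```

With these made-up numbers, `usage.free_gbytes` is 1.0 and `next_step(usage)` returns `"plenty"`.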

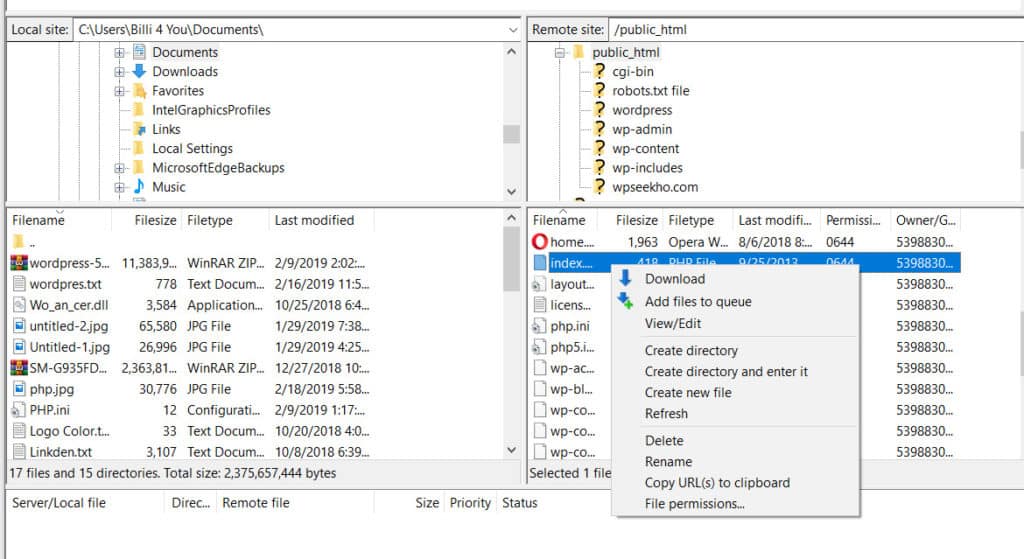


When working with Amazon’s Redshift for the first time, it doesn’t take long to realize it’s different from other relational databases. You have new options like COPY and UNLOAD, and you lose familiar helpers like key constraints. You can work faster with larger sets of data than you ever could with a traditional database, but there’s a learning curve to get the most out of it. One area we struggled with when getting started was unhelpful disk full errors, especially when we knew we had disk space to spare. Over the last year, we’ve collected a number of resources on how to manage disk space in Redshift.